A look at how Java 17 stacks up against Kotlin and Scala for development teams in 2021, and an overview of popular JVM technologies.

What does Java look like in 2021? An overview of Java 17, the latest LTS Java release, including Records, Sealed Classes, and Pattern Matching.

Implementing super-convergence for deep neural network training in TensorFlow 2 with the 1Cycle learning rate policy.

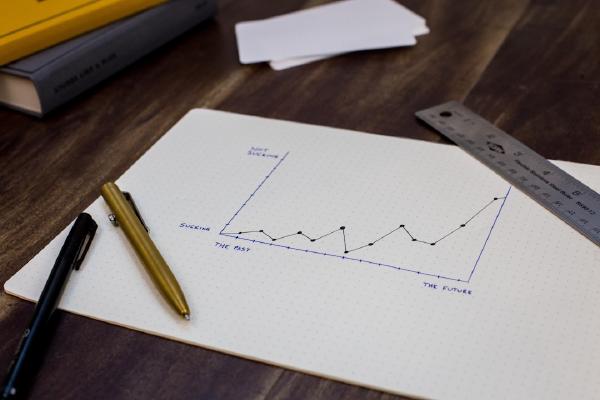

Implementing the technique in TensorFlow 2 is straightforward. Start from a low learning rate, then increase it gradually, recording the loss as you go. Stop when a very high learning rate is reached. Plot the losses against the learning rates and choose a learning rate at which the loss is decreasing rapidly.
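The sweep described above can be sketched without any framework. This is a minimal illustration, not the post's TensorFlow 2 code: `lr_range_test`, `steepest_descent_lr`, and `make_toy_train_step` are hypothetical names, the exponential growth of the learning rate is an assumption (the text only says to increase it), and the toy quadratic loss stands in for a real model. Reading "decreasing at a rapid rate" as "steepest negative slope against log learning rate" is also just one reasonable interpretation.

```python
import math


def lr_range_test(train_step, min_lr=1e-5, max_lr=10.0, num_steps=100):
    """Sweep the learning rate from min_lr to max_lr, recording the loss.

    train_step(lr) is a hypothetical callable that performs one training
    step at the given learning rate and returns the loss for that step.
    """
    # Assumption: grow the learning rate exponentially between the bounds.
    growth = (max_lr / min_lr) ** (1.0 / (num_steps - 1))
    lrs, losses = [], []
    lr = min_lr
    for _ in range(num_steps):
        loss = train_step(lr)
        if not math.isfinite(loss):
            break  # the loss has diverged; nothing useful beyond this point
        lrs.append(lr)
        losses.append(loss)
        lr *= growth
    return lrs, losses


def steepest_descent_lr(lrs, losses):
    """Return the learning rate after which the loss drops fastest per unit log(lr)."""
    best_lr, best_slope = lrs[0], 0.0
    for i in range(len(lrs) - 1):
        # losses[i + 1] is measured after the update taken with lrs[i].
        slope = (losses[i + 1] - losses[i]) / (math.log(lrs[i + 1]) - math.log(lrs[i]))
        if slope < best_slope:
            best_lr, best_slope = lrs[i], slope
    return best_lr


def make_toy_train_step(w0=5.0):
    """Toy stand-in for a real model: gradient descent on loss(w) = w**2."""
    state = {"w": w0}

    def train_step(lr):
        w = state["w"]
        loss = w * w  # loss before this step's update
        state["w"] = w - lr * 2.0 * w  # the gradient of w**2 is 2*w
        return loss

    return train_step


lrs, losses = lr_range_test(make_toy_train_step())
best_lr = steepest_descent_lr(lrs, losses)
print(f"steepest loss decrease near lr = {best_lr:.4g}")
```

With a real model, `train_step` would run one optimizer step on a mini-batch, and `lrs` and `losses` could be plotted on a logarithmic x-axis to pick the learning rate by eye. Real loss curves are noisy, so smoothing the losses before taking slopes is usually worthwhile.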

An end-to-end example of how to create your own image dataset from scratch and train a ResNet50 convolutional neural network for image classification using the FastAI library.

Instructions for installing FastAI v1 within a freshly created Anaconda virtual environment.

This post covers getting started with FastAI v1 using tabular data. It is aimed at people who are at least somewhat familiar with deep learning, but not necessarily with the FastAI v1 library.

A few years ago I came across a method for reading academic papers that I’ve kept coming back to as a reliable, systematic way to read important papers of varying complexity efficiently.

The method itself comes from a paper by Prof. Srinivasan Keshav, an ACM Fellow and researcher at the University of Waterloo. I recommend reading his paper, but I summarise the system here.

This post gives an overview of LightGBM and aims to serve as a practical reference. A brief introduction to gradient boosting is given, followed by a look at the LightGBM API and algorithm parameters.

A key concern when dealing with cyclical features is how to encode the values so that the deep learning algorithm can tell that the features occur in cycles.

This post looks at a strategy to encode cyclical features in order to clearly express their cyclical nature.
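As a rough illustration of the problem, one widely used strategy maps each value onto the unit circle with a sine and cosine pair, so that the end of a cycle lands next to its start. Whether this is the exact strategy the post uses is an assumption; `encode_cyclical` and `distance` are hypothetical helper names, and the hour-of-day feature is only an example.

```python
import math


def encode_cyclical(value, period):
    """Map a cyclical value (e.g. hour of day, period=24) to a (sin, cos) pair."""
    angle = 2.0 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def distance(a, b):
    """Euclidean distance between two encoded points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


# Example feature (an assumption for illustration): hour of day.
hour_0 = encode_cyclical(0, 24)
hour_12 = encode_cyclical(12, 24)
hour_23 = encode_cyclical(23, 24)

# As raw numbers, 23 and 0 are far apart; after encoding they are neighbours,
# while 12 sits on the opposite side of the circle from 0.
print("23h vs 0h:", distance(hour_23, hour_0))
print("12h vs 0h:", distance(hour_12, hour_0))
```

Both components are needed: a sine or cosine alone maps two different points in the cycle to the same value, whereas the pair identifies each position uniquely.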